What is it?

Gemma 3 is Google’s family of lightweight, open-weight LLMs, built from the same research and technology as Gemini 2.0. Gemma 3 models come in four sizes (1B, 4B, 12B, and 27B parameters). They’re designed to be efficient and high-performing, and as non-reasoning models, they excel at pattern-based tasks. The 4B, 12B, and 27B models accept image input and have a 128K-token context window, while the 1B model is text-only with a 32K-token window. All of them can be deployed locally or integrated into custom applications.

You can try Gemma 3 in your browser at Google AI Studio.

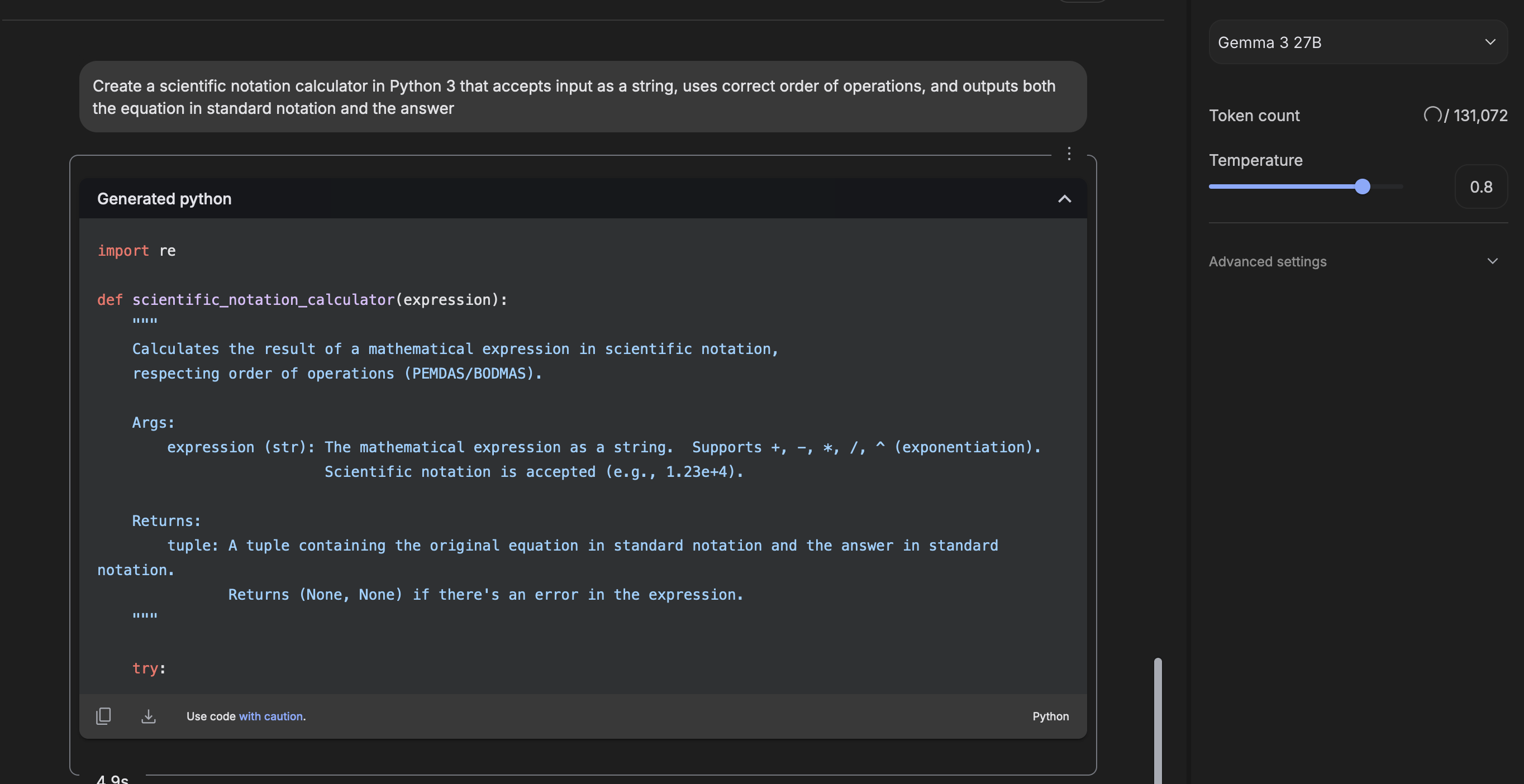

Gemma 3 generating Python code based on a simple prompt for a scientific notation calculator

What can I use it for?

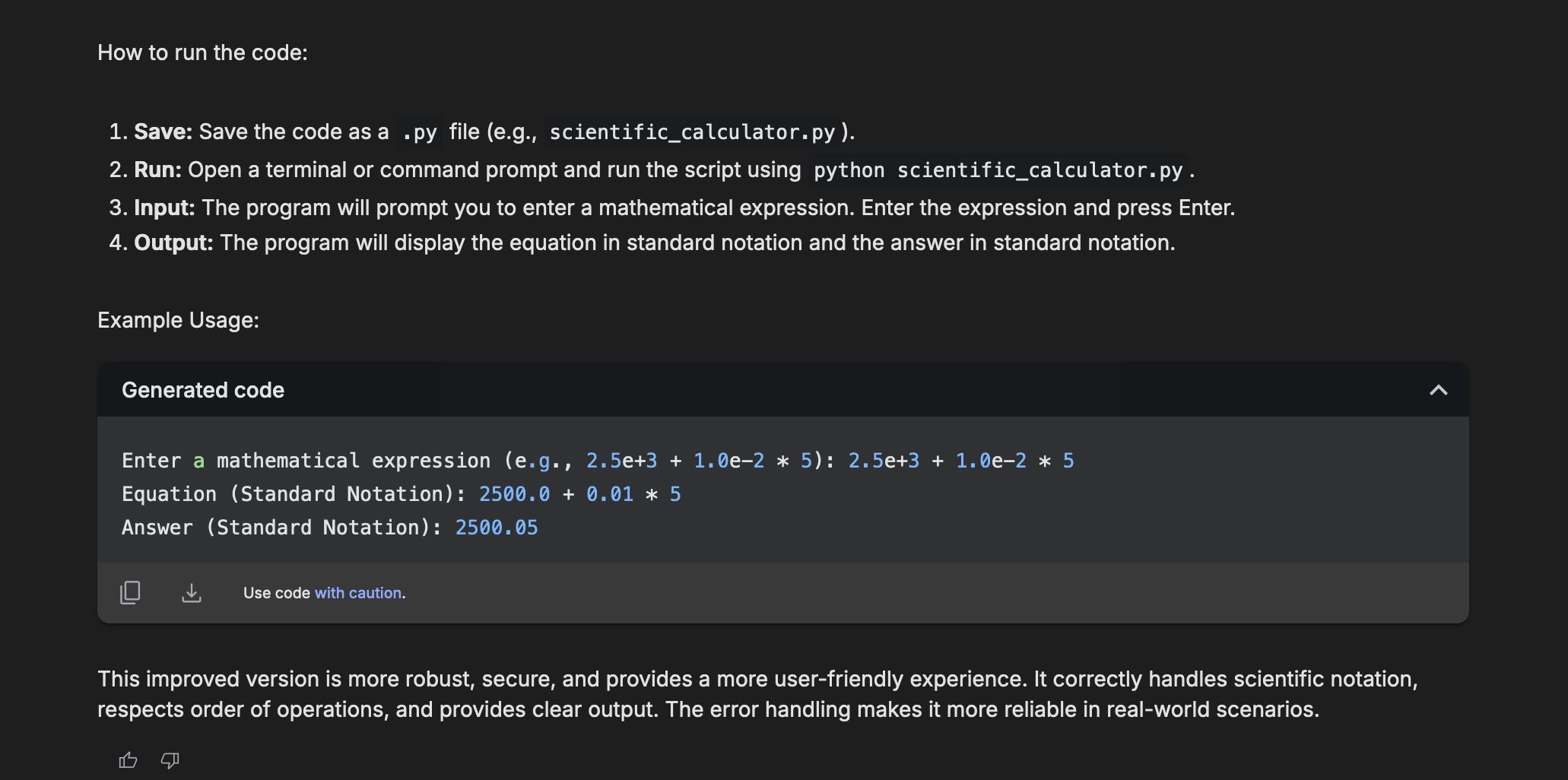

We found that Gemma 3 models were really strong at implementing simple, common programming patterns. Gemma 3 is also a good candidate for powering AI-based apps.

- Code completion: Get suggestions based on the context of your program

- Debugging: Recognize common errors and suggest fixes

- Refactoring: Improve code structure and apply best practices while maintaining functionality

- App dev: Use it in your apps (especially the smaller 1B and 4B models) for on-device AI

- Agentic dev: Gemma 3 can be used with agents like Continue or Cursor for agentic dev tasks

- Semantic search: Generate embeddings for document search and discovery

- Multimodal processing: Gemma 3 (4B and larger) can process both text and images using the built-in SigLIP image encoder

- Self-hosting: Run it on your own infra for data privacy
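To make the semantic search item above a bit more concrete, here is a minimal Python sketch of the ranking step only. It assumes you already have embedding vectors for your query and documents. The toy vectors, the document names, and the helper names `cosine_similarity` and `rank_documents` are all hypothetical, and how you get embeddings out of your own model setup is deliberately left out.

```python
import math


def cosine_similarity(a, b):
    """Return the cosine similarity of two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        # A zero vector has no direction, so treat it as "not similar".
        return 0.0
    return dot / (norm_a * norm_b)


def rank_documents(query_vec, doc_vecs):
    """Sort (doc_id, score) pairs from most to least similar to the query."""
    scored = [
        (doc_id, cosine_similarity(query_vec, vec))
        for doc_id, vec in doc_vecs.items()
    ]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)


if __name__ == "__main__":
    # Hypothetical 3-dimensional embeddings; real ones are much longer.
    docs = {
        "install-guide": [0.9, 0.1, 0.0],
        "api-reference": [0.2, 0.8, 0.1],
        "changelog": [0.0, 0.1, 0.9],
    }
    query = [0.8, 0.2, 0.0]
    for doc_id, score in rank_documents(query, docs):
        print(f"{doc_id}: {score:.3f}")
```

For more than a few thousand documents you would likely swap the plain loop for a vector index, but the scoring idea stays the same.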

What we like about it

Gemma 3 is an easy LLM to get started with for most general, simple tasks. The 27B model is especially impressive: it ranks 9th globally on Chatbot Arena while running on only a single GPU. We liked having an LLM we could easily run locally.
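As a rough idea of what running it locally can look like, here is a small Python sketch that talks to a local Ollama server. This is one possible setup, not necessarily the one we used: it assumes Ollama is running on its default port (11434) and that you have already pulled a Gemma 3 model under the tag `gemma3:4b`. The helper names `build_chat_request` and `ask_gemma` are made up for this example.

```python
import json
import urllib.request

# Assumption: a local Ollama server listening on its default port.
OLLAMA_CHAT_URL = "http://localhost:11434/api/chat"


def build_chat_request(prompt, model="gemma3:4b"):
    """Build the JSON payload for a single-turn, non-streaming chat call."""
    return {
        "model": model,  # assumed model tag; use whichever size you pulled
        "messages": [{"role": "user", "content": prompt}],
        "stream": False,  # ask for one complete JSON response
    }


def ask_gemma(prompt, model="gemma3:4b", url=OLLAMA_CHAT_URL):
    """Send the prompt to the local server and return the reply text."""
    payload = json.dumps(build_chat_request(prompt, model)).encode("utf-8")
    request = urllib.request.Request(
        url,
        data=payload,  # supplying a body makes this a POST request
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(request, timeout=120) as response:
        body = json.loads(response.read().decode("utf-8"))
    return body["message"]["content"]
```

With the server running, `ask_gemma("Write a Python function that parses scientific notation.")` should return the model's reply as a string. Nothing leaves your machine, which is the main appeal of this setup.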

As a non-reasoning model, Gemma 3 was great for simple programming tasks; we used it as a secondary model for less complex code generation and ops tasks.

We also appreciated how accessible Gemma 3 is to fine-tune for more advanced use cases. This is a huge plus both for programming tasks and for customizing it as the LLM behind your AI-based apps.

Gemma 3 explaining its code output